I love working with the Internet Archive’s collections, especially the growing book collection. As an engineer and sometimes scholar, I know there’s a lot of human knowledge inside books that’s difficult to discover. What new things could we do to help our users discover knowledge in books?

Today, most people access books through card catalog search and full-text search, both essentially 20th-century technologies. If you ask for something broad or ambiguous because you don’t yet know what you’re looking for, any attempt to present a short list of the most relevant results is likely to be overly narrow, and it won’t inspire discovery or serendipity.

For the past few months, I’ve been experimenting with a new way to visualize book contents. This experiment starts with one simple idea: Most sentences contain related things. If I see a concept and a year together in a sentence, the odds are that the two are related. Consider this sentence:

A new, Gregorian Calendar, was introduced by Pope Gregory XIII in 1582.

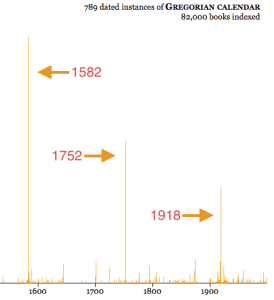

I’ll explain in a minute how I figured out that Gregorian Calendar and Pope Gregory XIII are things, and that 1582 is a year. Given that, what can we learn from the sentence? We can guess that these things and the year are probably associated with each other. This guess is sometimes wrong, but let’s try adding together data from around a hundred thousand books and see what happens:

Three years have a relatively large number of sentences containing “Gregorian calendar” and that year. Are these important dates in the history of the Gregorian Calendar? Yes: in 1582, Pope Gregory XIII had Catholic countries adopt this new calendar, replacing the Julian calendar. In 1752, England adopted it, and in 1918, after the Russian Revolution, Bolshevik Russia adopted it.
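The aggregation behind this kind of graph can be sketched in a few lines. This is only an illustration of the idea, not the code used in the experiment: the function names `count_cooccurrences` and `year_histogram` and the tiny sample data are assumptions, and the input is taken to be sentences that have already been tagged with their things and years.

```python
from collections import Counter


def count_cooccurrences(tagged_sentences):
    """Count how many sentences contain each (thing, year) pair.

    tagged_sentences: iterable of (things, years) tuples, one per sentence.
    """
    counts = Counter()
    for things, years in tagged_sentences:
        # Use sets so a pair is counted once per sentence, even if repeated.
        for thing in set(things):
            for year in set(years):
                counts[(thing, year)] += 1
    return counts


def year_histogram(counts, thing):
    """Return {year: sentence_count} for one thing: the bars of the graph."""
    return {year: n for (t, year), n in counts.items() if t == thing}


# Made-up sample data standing in for sentences from many books.
sentences = [
    (["Gregorian calendar", "Pope Gregory XIII"], [1582]),
    (["Gregorian calendar"], [1582]),
    (["Gregorian calendar"], [1752]),
    (["Julian calendar"], [1582]),
]
counts = count_cooccurrences(sentences)
histogram = year_histogram(counts, "Gregorian calendar")
```

With enough sentences, years that are genuinely tied to a thing should accumulate far more counts than the occasional coincidental pairing.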

Let’s take a look at some of the actual book sentences from the most popular year, 1582:

1582

The Cambridge handbook of physics formulas

The routine is designed around FORTRAN or C integer arithmetic and is valid for dates from the onset of the Gregorian calendar, 15 October 1582.

The Cambridge history of English literature, 1660-1780

In 1582 Pope Gregory XIII (hence the name Gregorian Calendar) ordered ten days to be dropped from October to make up for the errors that had crept into the so-called Julian Calendar instituted by Julius Caesar, which made the year too long and added a day every one hundred and twenty-eight years.

They give year, month, and day in cyclical characters and their equivalent in the Western calendar (using the modern Gregorian calendar even for pre-1582 dates).

The crest of the peacock : non-European roots of mathematics

Clavius was a member of the commission that ultimately reformed the Gregorian calendar in 1582.

You can give the experiment a try at https://books.archivelab.org/dateviz/.

Now that you’ve seen what the experiment looks like, let’s look at some of the details of building this visualization. (The code can be found on GitHub at https://github.com/wumpus/visigoth/.)

We need a way to find dates in sentences. Sometimes it’s obvious that something is a date: “January 31, 2016” or “Jan 2016.” Other times it’s more ambiguous: a 4-digit number might be a year, or it might be a section of a US law (“15 U.S.C. § 1692”), or a page number in a book. What I ended up doing was creating a series of patterns (see https://github.com/wumpus/visigoth/blob/master/visigoth/dateparse.py) that look for English helper words (“In 2016”, “before 1812”) before guessing that a 4-digit number is a date. While this technique has both false positives and false negatives, it works well enough not to hurt the visualization significantly.
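To make the helper-word idea concrete, here is a minimal sketch. It is not the pattern list from `dateparse.py`: the helper words, the year range (1000 to 2099), and the function name `find_years` are all assumptions chosen for illustration.

```python
import re

# Hypothetical helper words; a real list would be longer.
HELPER_WORDS = r"(?:in|before|after|since|until|by|during|around|circa)"
MONTHS = (
    r"(?:Jan(?:uary)?|Feb(?:ruary)?|Mar(?:ch)?|Apr(?:il)?|May|June?|July?|"
    r"Aug(?:ust)?|Sep(?:tember)?|Oct(?:ober)?|Nov(?:ember)?|Dec(?:ember)?)"
)
# Assumed year range: 1000-2099. This is the only capturing group.
YEAR = r"(1\d{3}|20\d{2})"

# "In 2016", "before 1812": a helper word directly before a 4-digit number.
HELPER_PATTERN = re.compile(r"\b" + HELPER_WORDS + r"\s+" + YEAR + r"\b", re.IGNORECASE)
# "January 31, 2016", "Jan 2016", "October 1582": a month name before the year.
MONTH_PATTERN = re.compile(r"\b" + MONTHS + r"\.?\s+(?:\d{1,2},\s+)?" + YEAR + r"\b")


def find_years(sentence):
    """Return the sorted years that appear in a date-like context."""
    years = set()
    for pattern in (HELPER_PATTERN, MONTH_PATTERN):
        for match in pattern.finditer(sentence):
            years.add(int(match.group(1)))
    return sorted(years)
```

A bare number such as the one in “15 U.S.C. § 1692” is skipped because nothing date-like precedes it. The same rule also misses real years that appear without a helper word, which is the false-negative side of the trade-off.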

The next item is generating the list of things (people, places, concepts, etc.) in a sentence. There are many techniques for doing this, ranging from computationally-expensive machine-learning libraries like the Stanford NER library, to using human-generated lists such as the US Library of Congress Name Authority Files. There’s also the complication of disambiguating things like “John Smith.” (Which “John Smith” of the hundreds do we mean?) To match the simple nature of the other algorithms in this experiment, I decided to use a very simple dataset: English Wikipedia article titles. Not only is this a comprehensive collection of encyclopedic things, but there are numerous human-generated “redirects,” which provide a list of synonyms for most article titles. For example, “Western calendar” is a redirect to “Gregorian Calendar,” and in fact numerous books do use the term “Western calendar” to refer to the Gregorian calendar.
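A small sketch of how titles and redirects can serve as a lookup table follows. The miniature `TITLES` and `REDIRECTS` tables, the function names, and the longest-match-first strategy are assumptions for illustration; the experiment’s actual matching code may differ.

```python
import re

# Hypothetical miniature of the Wikipedia title and redirect tables.
TITLES = {"Gregorian calendar", "Pope Gregory XIII", "Julian calendar"}
REDIRECTS = {
    "Western calendar": "Gregorian calendar",
    "Gregory XIII": "Pope Gregory XIII",
}


def build_lookup(titles, redirects):
    """Map every lowercased title or redirect to its canonical article title."""
    lookup = {title.lower(): title for title in titles}
    for alias, target in redirects.items():
        lookup[alias.lower()] = target
    return lookup


def find_things(sentence, lookup, max_words=4):
    """Return canonical titles found in a sentence, preferring longer phrases."""
    words = re.findall(r"[\w'-]+", sentence)
    found = set()
    i = 0
    while i < len(words):
        # Try the longest phrase starting at word i first, then shorter ones.
        for n in range(min(max_words, len(words) - i), 0, -1):
            phrase = " ".join(words[i:i + n]).lower()
            if phrase in lookup:
                found.add(lookup[phrase])
                i += n  # skip past the matched phrase
                break
        else:
            i += 1  # no phrase starts here; move on one word
    return found


lookup = build_lookup(TITLES, REDIRECTS)
```

Trying longer phrases first means “Pope Gregory XIII” is matched as one thing rather than also matching the shorter redirect “Gregory XIII” inside it. Both spellings resolve to the same canonical title anyway, which is the point of using redirects as synonyms.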

Our next task is ranking. Two aspects of this visualization use ranks. First, the suggestions that come up while users are typing in the “thing” box are ordered by Wikipedia article popularity. Eventually we’ll have enough usage of this visualization that we can use our own users’ data to put suggestions in a better order. Until then, using Wikipedia popularity is a good way to make suggestions more relevant.

A ranking of the books themselves is useful in two ways. First, it’s used to pick which example sentences are shown for a given pair of thing and date. Second, given that I only had enough computational resources to process a fraction of the scanned books in the Internet Archive’s collection, I chose 82,000 books using the same ranking scheme. This ranking scheme doesn’t have to be that good in order to deliver a lot of benefit, so I chose a superficial approach of awarding points to academic book publishing houses, book references in Wikipedia articles, and book popularity data from Better World Books, which is a used bookseller and a partner of the Internet Archive.
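A points-based ranking of this kind might look like the sketch below. Everything specific in it is an assumption made for illustration: the `Book` fields, the publisher list, the weights, and the cap on Wikipedia citations are not taken from the experiment.

```python
from dataclasses import dataclass

# Hypothetical list of academic publishers, stored lowercased.
ACADEMIC_PUBLISHERS = {"cambridge university press", "oxford university press"}


@dataclass
class Book:
    title: str
    publisher: str
    wikipedia_citations: int       # times cited in Wikipedia articles
    bookseller_popularity: float   # assumed to be normalized to 0.0-1.0


def score(book):
    """Award points for each signal; the weights are arbitrary placeholders."""
    points = 0.0
    if book.publisher.lower() in ACADEMIC_PUBLISHERS:
        points += 2.0
    # Cap the citation signal so one heavily cited book cannot dominate.
    points += 1.0 * min(book.wikipedia_citations, 5)
    points += 3.0 * book.bookseller_popularity
    return points


def top_books(books, limit):
    """Return the highest-scoring books, best first."""
    return sorted(books, key=score, reverse=True)[:limit]
```

The same `score` function can serve both purposes described above: selecting which books to process at all, and choosing which book’s sentence to show first for a given thing and date.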

What’s the result of the experiment? A relatively simple set of algorithms applied to a small collection of high-quality books seems to be both interesting and fun for users. As a next step, I would like to extend it to include a better list of “things”, and extract data from many more books. In a few years, we might have access to 100 times as many scanned books. By then, I hope to find several other new ways to explore book content.

This is really cool, and having played with it for a few minutes, I have a suggestion for improving it a little.

For more modern topics, I was wondering why there seems to be a small spike just before the correct first date. (For instance, Harriet Tubman, born in 1820, has a false-positive spike in 1800; ARPANET, founded in 1969, has one in 1960.) It’s clear now that these are the result of sources that mention the decade or century to which a thing belongs, and these seem to (almost?) always be in a form like “1950s” or “1900s.” A nice enhancement might be detecting years that end in “s” and interpreting and displaying them as spans (decades or centuries) rather than as single years on the timeline.

Of course, there might be some issue with figuring out whether something like “1900s” refers to the century or the first decade of that century, but… well, it’s a start.

Thanks for the suggestion, Everett. I do already parse dates like “1800s” and date ranges like “1941-5”. As you’ve guessed, I’m currently treating 1800s as being the year 1800, and not the century. I was at a bit of a loss as to how to mix centuries, decades, and arbitrary date ranges into the bar graph. It’s definitely something that I’d like to figure out!

Hi, Greg, very nice job. I’m sure that Eric L. Morgan (will) appreciate(s) it a lot.

About “Can I change the X axis to be something other than the years from 1000 CE to 2016 CE?”: maybe a simple solution is to let users view books related to a specific year by adding a field where they can enter the year they are interested in, instead of clicking on the year in the graph (a difficult task given the one-pixel precision required of the mouse, not to mention tapping on a touch pad).

Thx. sb

Stefano, thanks for the idea, that could be a good workaround!

Hi Greg,

Disambiguation is difficult…I wondered if it would be possible to exclude specific dates or hits that are unrelated to the “person” you are searching for? (I searched John Glenn)

Also might there be a way to zoom in on the time span of hits rather than always seeing the entire date range?

ge

Glynn,

If anything I’ve made the disambiguation problem harder by using synonyms! I couldn’t think of anything easy to do, and previous research on disambiguation uses pretty complicated techniques. I’m tempted to try clustering, but that risks splitting people into multiple clusters, if they’ve been written about in multiple contexts — e.g. Isaac Newton the scientist vs. Isaac Newton, alchemist.

Thanks, Greg. This is great. In playing around with this, with ngram, the visualizations in Open Library, and other similar data, I’m wondering how we can take into account the incredible increase in publishing that has taken place in the last half century? Any data graph is pretty skewed toward modern books because there are so darned many of them compared to earlier years. I realize that your experiment here works regardless, but one sees the trend in the resulting graphs. Would love to hear ideas on how we can adjust for that.

Karen,

Since Google ngram is using the publication date of the book, they’ve normalized their results to reduce the effect you mention. The effect in this experiment is more subtle: since my book ranking signals are skewed towards more modern books, I’m skewed towards more modern scholarship. And there’s no good way to normalize for that!

Very nice experiment, my compliments. Just one more piece of feedback.

Related to Everett’s comment, I think the system does not take commas and other punctuation within sentences into account, which may differ from what users expect.

For example,

1) “Probably sighted by French explorer Antoine de la Roche in 1675, South Georgia was named after George III by Captain James Cook, the first European to set foot on it a hundred years later.”

1675 is associated with James Cook (search term).

2) “Contact with European explorers dates from the visit of Tasman in 1603, but there were no substantial encounters between the indigenous inhabitants (the Maori) and Europeans until the voyages of James Cook between 1769 and 1778.”

1603 is shown for James Cook who was born in 1728.

It would be excellent if you could filter this kind of distortion out (and/or let users opt out). Perhaps some adjustable parameters could be provided, so that users can optimise the results themselves at their own risk.

Thank you for your feedback! You are correct that I have done nothing with the grammar other than split paragraphs into sentences and label dates. I am aware of software that analyzes the structure of English sentences, but all of the packages I’ve examined are very computationally expensive and aren’t very accurate. Perhaps in another 5-10 years?

For the examples that you give, in the current user interface of this experiment, it’s fairly easy for a user to see that the dates 1675 and 1603 aren’t relevant to James Cook, after examining the sentences. This is similar to web search engines showing “snippets” for results with appropriate words bold-faced.

Thanks! True, it is possible to spot the not-quite-right results by hand. But it is still extra work and extra clicks for the users. I would say there is another possibility, crowd-sourcing to curate and improve the results, if that is feasible in the future.

You may have already investigated, but recently linguists work a lot with corpora and annotations (POS etc). For example, do you know this? http://www.cis.uni-muenchen.de/~schmid/tools/TreeTagger/

I don’t know the technical capacity of computation, but this kind of text analysis might help improve your work. As AI and deep learning are getting popular and seriously impressive these days, it may not take that long to achieve. You could ask IBM WATSON. 🙂

I am not a linguist, but surrounded by many of them in Europe. If you are interested, there could be a possibility to do something together within my network? You can contact me personally in that case. Cheers.

Thanks, I’ll be in touch!

As a librarian who does some work with digitized books, I found this very interesting. Normally I am working with books in the public domain (i.e., mostly not 20th century) so it was refreshing to work with 20th and even 21st century materials.

Having previously worked with nGram viewers (Google books, Chronicling America newspapers) and because of a slight visual similarity (which is probably unavoidable) with the visualization, it took me a while to work out all the differences between what you have created and an nGram viewer for digitized books/texts. nGram viewers can answer certain questions easily (“When did the use of ‘wheelman’ for ‘bicyclist/cyclist’ fall into disuse?”) but I often feel you need specialist technical skills to use the “smoothing” and other normalization features (which frankly I don’t have). And for digitized books, there are often metadata problems since dates in bibliographic records may be wrong or misapplied to a particular volume where the target text appeared.

Here the noise problem in particular seems very low. I guess there is the occasional problem (mentioned in another comment) with commas being a better choice for indicating discrete search units than a period, but mostly I found the results amazingly good.

The creation of a controlled vocabulary from Wikipedia seems to have worked very well. From a user perspective, having the autocomplete feature that effectively prompts users as to what is available nicely reminds the user that this is, in fact, not a free text search but for a pre-coordinate subject string (to draw on my ancient library degree, although perhaps I’m not getting it quite right).

I have two only slightly related questions.

In the 82,000 books you worked with, did you end up with a count of total sentences and then sentences that have relevant year dates in them? What percentage of the total does the set of sentences with year-dates represent?

I guess that was two questions but I find I have another.

Am I correct that you identify and connect your controlled vocabulary terms with words from text outside of the sentences that have year-dates, as well as in those sentences? I did a search on Donald Trump and was surprised to get a result that had “Donald” and a year that was referring to the right Donald without “Trump” in the sentence.

Thanks.

Michael,

Keeping counts of all sentences and all sentences containing a date is a great idea; I’ll add it to my list for next time.

As for The Donald, a Wikipedia redirect is the most likely cause. I’ve also got some code that tries to map ‘Darwin’ to ‘Charles Darwin’, given that many books give the full name the first time, and use just the last name after that.